Introduction and summary

Federal school improvement policies, by design, have historically targeted the schools struggling the most. Under the No Child Left Behind Act (NCLB), states were required to identify and support schools that failed to meet benchmarks, which led to overclassification and thinly spread resources. Beginning in 2012, however, under the Obama administration’s waivers from NCLB, states instead have identified schools that performed in the lowest 5 to 15 percent statewide, a narrower focus for targeting resources that was driven in part by limited state capacity. The latest iteration of federal law maintains the requirement that states identify the lowest-performing schools, but now, districts will be responsible for intervening where schools need the most support. As a result, the Every Student Succeeds Act (ESSA) largely shifts the school support role from state to district hands.

Importantly, states will continue to play a role in supporting schools that they have identified for improvement under ESSA. Yet, given districts’ local locus of control and prime positioning as change agents, they have greater capacity to create systems that support all schools.1 This broadening of district support, however, does not have to come at the expense of schools that are struggling to make gains. Rather, districts can create, implement, and sustain systems that help all schools—not just those at the bottom—to continuously improve.

Denver Public Schools (DPS) provides a window into this work. As the largest school district in Colorado, DPS represents 10 percent of the state’s K-12 student population.2 The district uses a school rating system called the School Performance Framework (SPF) to inform its Tiered Support Framework (TSF), which categorizes all district-run schools into three levels of support: universal, strategic, and intensive.3 The universal tier includes foundational supports available to all schools, whereas school-specific improvement plans inform supports for schools in the strategic tier. Schools in the intensive tier receive district support for accelerated improvement and school redesign.

Both the district’s school rating system and the TSF are unique to Denver, which leverages its district capacity to provide supports for all schools while ensuring that higher-need schools receive additional and differentiated supports and resources.4 Altogether, the district identifies, plans, supports, and monitors school performance in order to help all schools improve.

By analyzing the academic growth of DPS schools over time, one can better understand how the district’s framework supports school performance. Comparing the growth of lower- and higher-performing DPS schools with the rest of Colorado and the growth of DPS schools receiving more rigorous supports with that of peer schools across other districts yields particularly promising results. Not only did Denver schools show greater growth than the rest of the state in recent years, but analyses suggest that DPS schools in need of additional tiered supports made greater gains than similar schools in Colorado. Specifically:

- In recent years, all DPS schools—including both higher- and lower-performing schools—showed greater growth than the rest of the state, particularly in math.

- When controlling for school demographics, students in DPS schools receiving more rigorous supports in the strategic or intensive tiers showed comparable gains in ELA and math relative to students in higher-performing DPS schools.

- DPS elementary schools receiving strategic or intensive tier supports showed greater schoolwide gains—in addition to greater gains for Hispanic students and students eligible for free or reduced-price lunch—as compared with similar schools in the state.

- When compared with peer schools, however, DPS elementary schools receiving strategic or intensive tier supports had larger achievement gaps between students who were and were not eligible for free or reduced-price lunch.

Importantly, DPS’ Tiered Support Framework is not the extent of the district’s school improvement efforts. The district is using a variety of strategies to increase school quality and close opportunity gaps, including investing in early learning opportunities, expanding high-quality school options throughout the district, and developing strong pipelines for teacher leadership.5 The district’s gains therefore cannot be attributed to its tiered supports alone, but an analysis of the framework and school progress can provide a window into DPS’ efforts and outcomes.

Accordingly, this report will explore how Denver’s school rating and improvement systems support school performance across the spectrum. Although academic achievement is not the only barometer of school performance, the analysis in this report will use test scores and student growth as outcome measures, given the significant role these metrics play in Denver’s school rating system and the availability and simplicity of data.6 The results of this analysis suggest that other districts can learn important lessons from Denver’s approach to continuous improvement for all schools.

Overview of DPS school accountability and improvement systems

Denver Public Schools has an accountability and support system that is unique to the district and aligns with the DPS vision of school quality. The district annually classifies all schools into five performance categories, which it uses to help determine which of the three tiers of supports each district-run school receives. DPS supports alternative schools—schools that serve large percentages of high-risk students or students with special needs—through a unique accountability, support, and funding structure and uses a separate tiering process for charter schools.7 As a result, alternative schools, charter schools, and their tiering processes are excluded from the analysis.

In addition to a district rating and a support tier, Denver schools receive a classification under Colorado’s statewide accountability system, which assigns schools to one of four school performance framework plans: “performance,” “improvement,” “priority improvement,” or “turnaround.” Denver goes through an annual review process with the state in order to align the state and district school classifications.8

In the 2014-15 school year, Colorado administered new assessments, the Colorado Measures of Academic Success (CMAS). As a result, neither the state nor Denver classified schools using its rating system that year; school ratings data at the state and district level are available for the 2015-16 and 2016-17 school years.9 In compliance with ESSA accountability requirements, Colorado has also identified schools for comprehensive support and improvement or targeted support and improvement.10

Identification process

Denver Public Schools annually classifies school performance using five performance ratings: “distinguished,” “meets expectations,” “accredited on watch,” “accredited on priority watch,” and “accredited on probation.”11 These school performance determinations are based on a combination of measures, including:

- Academic achievement and growth from student assessments, including state assessments in English language arts (ELA), math and science; college and career readiness assessments such as the PSAT and SAT; early literacy assessments; and English language proficiency assessments

- Student satisfaction survey results; parent satisfaction and engagement survey results; and student attendance rates

- Postsecondary readiness status and growth, which include PSAT and SAT results; graduation and dropout rates; remediation rates; and results from Advanced Placement, International Baccalaureate, and concurrent enrollment courses

- Academic gaps between historically underserved students and their peers12

In 2017, the most recent year for which DPS school ratings data are available, the district classified half of schools as “meets expectations”—up from about 40 percent the previous year. In both 2016 and 2017, DPS classified about one-third of schools as “accredited on watch,” and between 2016 and 2017, it significantly reduced the number of schools that were “accredited on probation.” (see Figure 1 below) Notably, in response to community concerns that the district inflated scores by overstating gains on early literacy assessments, the district has proposed changes to how it calculates school ratings, which tend to shift at least slightly on an annual basis.13

Denver uses these school ratings and other key data to categorize district-run schools into the three support tiers that make up the district’s Tiered Support Framework. According to the district, the universal tier focuses on continuous improvement to move schools from “good” to “great.” The goal of the strategic tier is to reverse the trajectory of higher-risk schools through preventative supports and resources. Lastly, the intensive tier provides the highest level of support for schools, using school turnaround or other improvement and intervention strategies.14

Overall, TSF identification involves three steps. First, the district reviews school ratings and indicators that correlate with future performance, such as voluntary teacher turnover, annual climate survey teacher feedback, and parent and student satisfaction. Second, where needed, the district performs a qualitative assessment of school culture and instructional structures. Third, based on data gleaned from the first two steps, district leadership makes recommendations on final tier placement.15

This identification process occurs each year following the release of the district SPF, typically by the end of September or mid-October.16 For example, in fall of 2016, using the 2016 school ratings and other indicators described above, the district identified the TSF tier for each school, which guided improvement planning and supports for the remainder of the 2016-17 school year as well as for the 2017-18 school year.17 During the 2016-17 school year, schools identified in the strategic or intensive tier conducted a needs analysis informed by a third-party school quality review; engaged in immediate- and long-term improvement planning; and implemented “quick-win” improvement strategies, such as targeted tutoring programs, teacher training, or social-emotional supports. In the 2017-18 school year, schools implemented their full improvement plans, which aligned time, people, and money.18

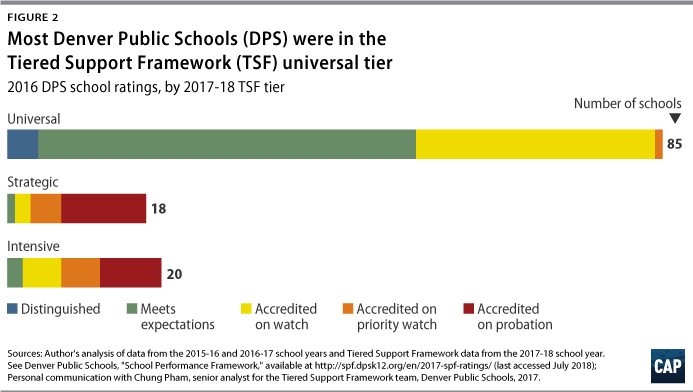

Based on 2016 school ratings, more than two-thirds of schools were identified in the universal tier for the 2017-18 school year; these schools were primarily classified as “distinguished,” “meets expectations,” or “accredited on watch.” Another third of DPS schools, from four of the five school rating categories, were split nearly evenly across the strategic and intensive tiers. Notably, because the district supports schools in the intensive tier for a minimum of five years, some schools that the district classified as “meets expectations” in 2016 continued to receive support in the intensive tier during the 2017-18 school year. (see Figure 2 below)19

Tiered supports

The universal tier outlines the supports that are available to all schools, including coaching on data-driven instruction, professional development, and community engagement programs such as the Parent Teacher Home Visit program. Strategic supports are informed by school-specific improvement plans, which can include increased time for teacher planning, school leadership team support, and wraparound services provided by external experts. Schools in the intensive tier, whose supports are driven by school-specific needs and designs, receive district support for school redesign, from redesigning critical elements of the school to redesigning the entire school. Whole school redesigns typically include replacing school leaders and staff or opening a new school in partnership with the community.20

In addition to planning and implementation support, schools in the strategic and intensive tiers may receive targeted leadership development and resources. For example, a cohort of principals and school leadership teams from schools in both tiers receives one or two years of professional development through the University of Denver, the University of Virginia, or the Relay Graduate School of Education. Both tiers of schools also receive additional funding to use at their discretion, guided by their school improvement or redesign plans.21

Progress monitoring is also foundational to the district’s school support and accountability model. All schools, regardless of tier, have a regular review of their improvement plans with instructional superintendents, who supervise principals and provide accountability as well as school support; this occurs approximately every four to six weeks, alongside typical weekly visits. Schools in the intensive tier have more frequent school visits, and after three years of implementation and monitoring, the district evaluates whether the highest-priority schools (those that implemented a whole-school redesign model) are on course to exit that tier after year five.22 Ultimately, schools remain in the intensive tier for a minimum of five years so that the district can provide ongoing support for schools to make and sustain gains; however, schools may receive longer support or intervention if improvement goals are not met.

Analysis of DPS and Colorado school performance

The goal of this analysis is to examine how the performance of Denver Public Schools, all of which receive tiered district supports, compares over time with that of the state and peer schools across other districts. Given Colorado’s transition to new state assessments in the 2014-15 school year, data are restricted to the three most recent years of available state assessment results, from the 2014-15, 2015-16, and 2016-17 school years.23

Approximately 1,400 Colorado schools—about 120 of which are in Denver—are included in the analysis, excluding charter schools and alternative schools.24 On average, excluding Denver, Colorado schools represented 45 percent free or reduced-price lunch-eligible students, 31 percent Hispanic students, 59 percent white students, and 3 percent black students. Meanwhile, Denver schools represented 69 percent students eligible for free or reduced-price lunch, 56 percent Hispanic students, 25 percent white students, and 12 percent black students.

As part of the CMAS assessments, students in third grade through ninth grade take the Partnership for Assessment of Readiness for College and Careers (PARCC) exam in ELA and math; they also take CMAS exams once in social studies during elementary and middle school and once in science during elementary, middle, and high school.25 This analysis is restricted to school-level ELA and math scale scores, which measure student performance at a point in time, as well as ELA and math growth scores, which measure student improvement over time. Since growth scores capture academic progress, they are important for determining school effectiveness, particularly for districts that serve more disadvantaged students and have lower scale scores.

Scale scores

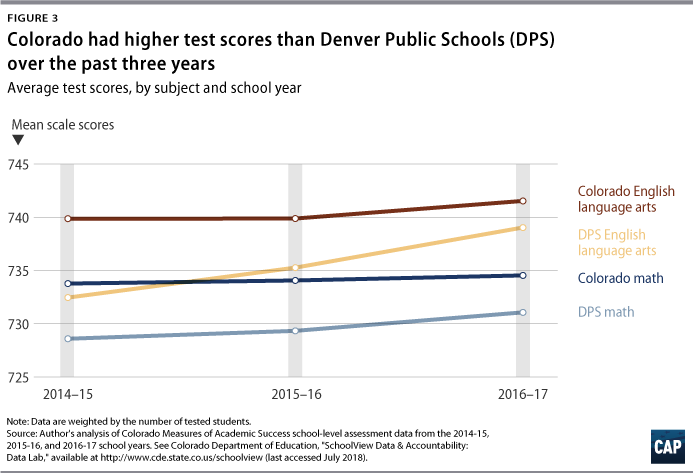

On the CMAS, students receive scale scores between 650 and 850, which are reported at the school level as mean scale scores, or the average performance of students in the school.26 Compared with raw scores, or the total number of points students earn on the test, scale scores adjust for slight differences in test form difficulty and enable comparisons across years.27 In the 2014-15, 2015-16, and 2016-17 school years, the state consistently outperformed DPS schools in ELA and math. (see Figure 3 below)

On average, these differences translate to Colorado schools, excluding DPS, outperforming DPS across all three school years by 4.5 points in math and 4.8 points in ELA.28 However, controlling for measures such as the race and ethnicity of students and poverty—using the percent of students eligible for free or reduced-price lunch as a proxy—Denver schools were associated with greater gains of 8.4 points in math and 8.5 points in ELA, relative to other Colorado schools.29

Growth scores

Colorado uses student growth percentiles to calculate how much growth a student made relative to his or her academic peers, who are identified by previous test score history. These scores range from 1 to 99. For example, a student with a student growth percentile of 75 improved as much as or more than 75 percent of his or her peers. Schools report their median growth percentile, which is the median student growth percentile of all the students in the school.30
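The aggregation step described above, from individual student growth percentiles to a school's median growth percentile, can be sketched in a few lines. This is only an illustration of the final step: the student-level percentiles themselves come from the state's growth model, which is not reproduced here, and the two schools and their values are hypothetical.

```python
from statistics import median


def median_growth_percentile(student_growth_percentiles):
    """Return a school's median growth percentile from its students' percentiles."""
    if not student_growth_percentiles:
        raise ValueError("at least one student growth percentile is required")
    for sgp in student_growth_percentiles:
        # Student growth percentiles range from 1 to 99.
        if not 1 <= sgp <= 99:
            raise ValueError(f"growth percentile out of range: {sgp}")
    return median(student_growth_percentiles)


# Hypothetical student growth percentiles for two illustrative schools.
school_a = [35, 48, 52, 61, 75]
school_b = [20, 41, 44, 58]

mgp_a = median_growth_percentile(school_a)  # middle value of five students
mgp_b = median_growth_percentile(school_b)  # average of the two middle values
```

Because the median is used rather than the mean, a handful of students with unusually high or low growth has limited influence on a school's reported figure.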

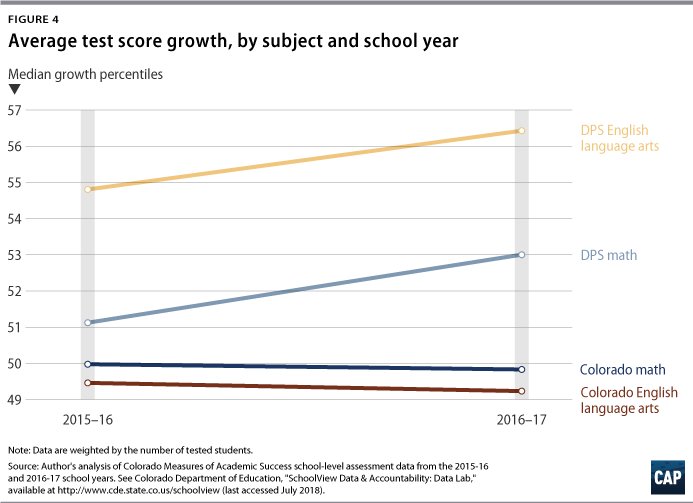

As Colorado administered the CMAS for the first time in the 2014-15 school year, growth data are available for the 2015-16 and 2016-17 school years. In both of those school years, all DPS schools showed greater growth than the rest of the state in ELA and math. (see Figure 4 below)

On average, these differences in growth translate to Denver schools outgrowing other Colorado schools by 2.2 percentiles in math and 6.3 percentiles in ELA.31 After similarly controlling for school-level measures of poverty, the race and ethnicity of students, and other measures, Denver schools were associated with greater gains of 5.6 percentiles in math and 7.4 percentiles in ELA, compared with the rest of the state.32

Analysis of DPS’ Tiered Support Framework and school performance

Strategic and intensive schools

To better understand how Denver supports school progress, schools can be analyzed through the lens of the district’s Tiered Support Framework, which organizes resources by the three tiers of support based on school need. DPS created the TSF in the 2012-13 school year in order to support both the district’s highest-needs schools and those in need of preventative support, in addition to schools that were on track for success. In the 2014-15 school year, DPS amended its policy so that schools identified for intensive support would remain in that tier for five years, regardless of improvements in performance. This policy was applied for the first time during TSF identification in the fall of 2015.

As examined above, in the 2015-16 and 2016-17 school years, DPS schools were associated with greater growth than the rest of the state. In order to understand which schools are driving this growth, one can unpack DPS’ academic outcomes by the district’s lower- and higher-performing schools through the lens of the TSF. Accordingly, this analysis will make a distinction between DPS schools that have and have not been classified in either of the more rigorous strategic or intensive support tiers over time.

Out of the 122 DPS schools included in the analysis, 52 were in the strategic or intensive tier at least once between the 2012-13 and 2016-17 school years. These schools will be referred to as the “strategic and intensive schools.” During this same period, the remaining 70 DPS schools were exclusively in the universal tier and will thus be referred to as the “universal schools.” Charter schools and alternative education campuses are excluded from the analysis because they have separate district support systems.

On average, the strategic and intensive schools included in the analysis represented 87 percent free or reduced-price lunch-eligible students, 71 percent Hispanic students, 15 percent black students, and 9 percent white students. Compared with universal schools, the strategic and intensive schools had significantly higher rates of poverty, greater shares of Hispanic and black students, and fewer white students.

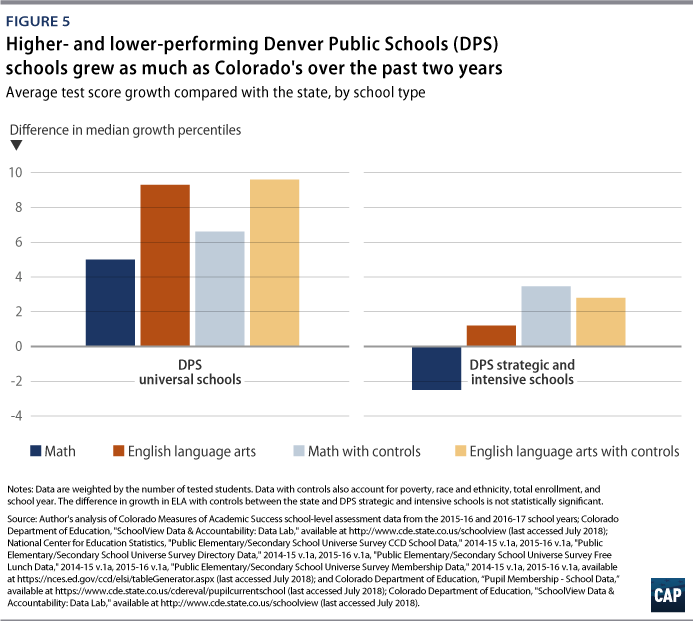

Like DPS schools overall, universal schools showed significantly greater growth, on average, than the rest of the state: 5.0 percentiles in math and 9.3 percentiles in ELA.33 Controlling for school-level measures of poverty and the race and ethnicity of students, among other measures, universal schools were associated with greater gains of 6.6 percentiles in math and 9.6 percentiles in ELA comparatively.34 The strategic and intensive schools grew slightly less than the state in math and slightly more than the state in ELA.35 However, similarly controlling for school-level demographics, strategic and intensive schools were associated with greater growth than the rest of the state: 3.4 percentiles in math and 2.8 percentiles in ELA, though the difference in ELA is not statistically significant.36 (see Figure 5 below)

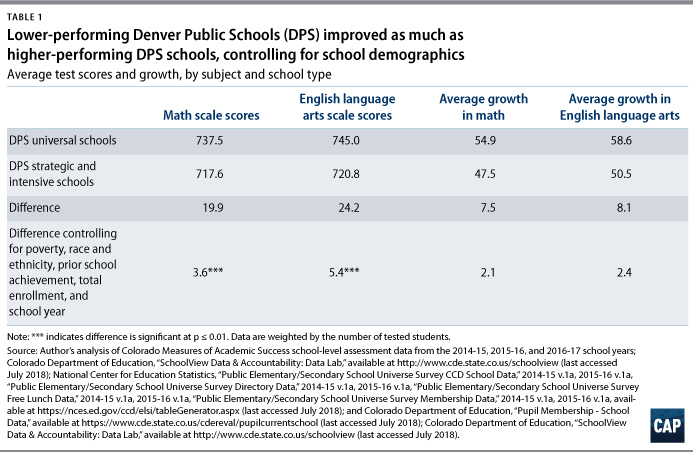

Within the district, universal schools had significantly higher scale scores and growth scores in both ELA and math than the strategic and intensive schools. However, after controlling for school-level measures of poverty and the race and ethnicity of students in the two groups of Denver schools, these gaps narrowed significantly. In particular, strategic and intensive schools were associated with as much growth as DPS schools in the universal tier that needed less rigorous supports, as differences were not statistically significant. (see Table 1 below)

Strategic and intensive schools compared with peer schools

Another way to understand the effectiveness of DPS’ support framework is to compare the growth of the strategic and intensive schools with the growth of similar schools across other districts. Accordingly, the following analysis will examine how performance varies across similar schools, distinguishing between those that do and do not receive TSF resources.

The schools across Colorado identified for comparison had math and ELA achievement and demographics in the 2011-12 school year that were similar to those of the strategic and intensive schools. This analysis creates the peer group using 2011-12 data in order to identify comparison schools during the year prior to when DPS implemented the TSF. Ideally, then, differences between the two groups will be suggestive, at least in part, of the relationship between DPS’ support framework and student outcomes.

Schools in this section of the analysis will be restricted to those with six years of data from the 2011-12 school year through the 2016-17 school year. Since three-quarters of the strategic and intensive schools with six years of data available are elementary schools, this section will exclusively explore the performance of elementary schools.37 As a result, the following analyses compare 35 strategic and intensive schools with a peer group of 117 schools across 30 Colorado districts.

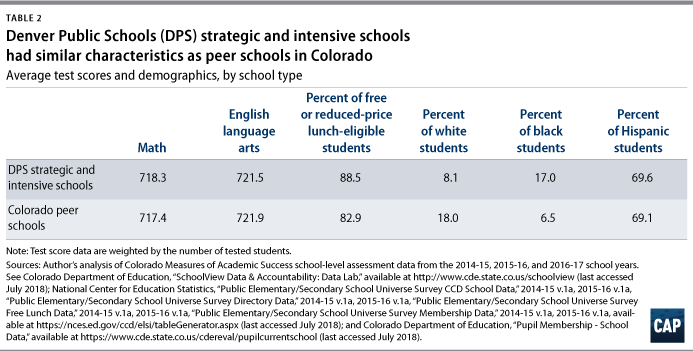

Using data from the 2014-15, 2015-16, and 2016-17 school years, Table 2, below, describes the two groups of schools. Both groups are close matches in achievement, poverty level, and percent of Hispanic students. However, because Colorado schools had significantly more white students and fewer black students than DPS over these three years, the group of peer schools in Colorado similarly had more white students and fewer black students than the strategic and intensive schools.

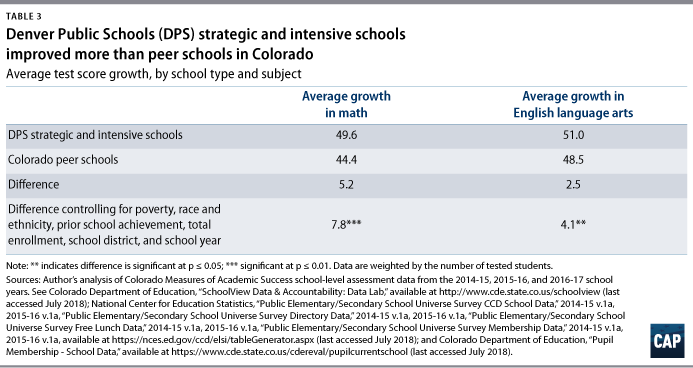

When comparing the group of strategic and intensive schools with the Colorado peer schools, the former was associated with greater gains of 5.2 percentiles in math and 2.5 percentiles in ELA.38 After controlling for school characteristics—such as school-level measures of poverty, the race and ethnicity of students, and prior school-level ELA and math achievement—these associated differences in growth increased to 7.8 percentiles in math and 4.1 percentiles in ELA.39 (see Table 3 below)

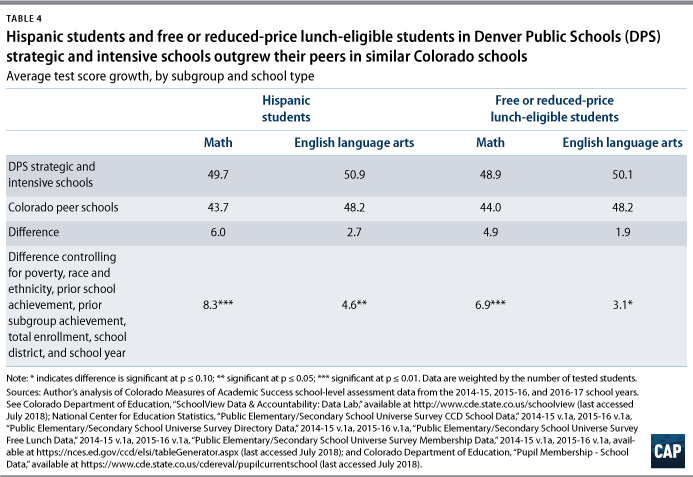

Receiving more rigorous supports was associated with greater gains of 6.0 percentiles in math and 2.7 percentiles in ELA for Hispanic students, compared with their peers.40 After controlling for school characteristics—such as school-level measures of poverty, the race and ethnicity of students, and prior ELA and math achievement of Hispanic students—these associated differences in growth increased to 8.3 percentiles in math and 4.6 percentiles in ELA.41 Similarly, receiving strategic and intensive tier supports was associated with greater gains of 4.9 percentiles in math and 1.9 percentiles in ELA for students who were eligible for free or reduced-price lunch, compared with their peers.42 Controlling for school characteristics increased these associated differences in growth to 6.9 percentiles in math and 3.1 percentiles in ELA.43 (see Table 4 below)

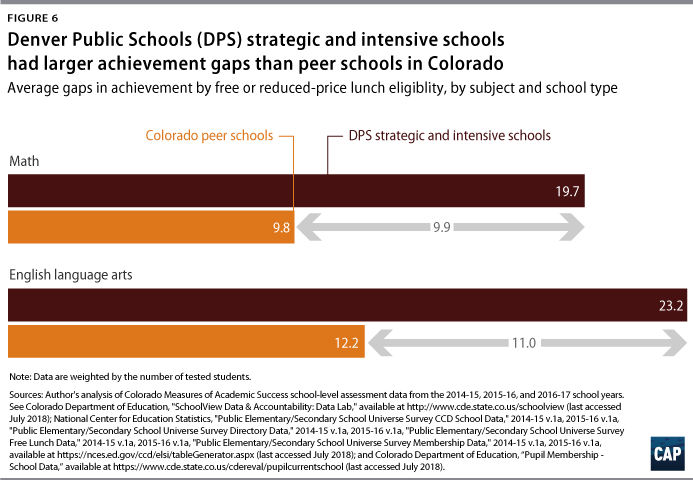

However, when compared with the Colorado peer schools, strategic and intensive schools were associated with larger achievement gaps between students who were and were not eligible for free or reduced-price lunch: 9.9 points in math and 11 points in ELA.44 (see Figure 6 below) Notably, this gap analysis is limited to schools that reported test score data disaggregated by both groups of students, which accounted for about half of the strategic and intensive schools and approximately 100 of the Colorado peer schools. Schools excluded from the gap analysis had too few tested students who were ineligible for free or reduced-price lunch to report their disaggregated data. For similar reasons, there were insufficient data to compare achievement gaps by race and ethnicity.
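The restriction described above, in which a school contributes to the gap analysis only when both student groups are large enough to be reported, can be illustrated with a short sketch. The reporting threshold `MIN_TESTED`, the school records, and the unweighted average are all hypothetical choices made for illustration; the report does not state the threshold, and its estimates come from regression models rather than a simple average.

```python
MIN_TESTED = 16  # hypothetical reporting threshold, not taken from the report


def frl_gap(school):
    """Return the non-FRL minus FRL mean scale score, or None if unreportable."""
    for group in ("frl", "non_frl"):
        # A subgroup with too few tested students has no disaggregated data.
        if school[group]["n"] < MIN_TESTED:
            return None
    return school["non_frl"]["mean"] - school["frl"]["mean"]


# Hypothetical schools with tested counts and mean scale scores by FRL status.
schools = [
    {"name": "School A", "frl": {"n": 120, "mean": 720.0}, "non_frl": {"n": 40, "mean": 745.0}},
    {"name": "School B", "frl": {"n": 200, "mean": 715.0}, "non_frl": {"n": 8, "mean": 750.0}},
    {"name": "School C", "frl": {"n": 90, "mean": 725.0}, "non_frl": {"n": 30, "mean": 740.0}},
]

gaps = {s["name"]: frl_gap(s) for s in schools}

# Only schools with a reportable gap enter the average.
reported = [g for g in gaps.values() if g is not None]
average_gap = sum(reported) / len(reported)
```

In this toy example, School B drops out because only eight of its tested students were ineligible for free or reduced-price lunch, which mirrors why high-poverty schools are the ones most likely to be missing from a gap comparison.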

Conclusion

Denver Public Schools created the Tiered Support Framework in order to help all schools continuously improve while also supporting the district’s highest-need schools. Not only did DPS schools show greater growth than the rest of the state in ELA and math in recent years, but analyses suggest that Denver’s highest-need schools—those requiring preventative or more intensive support—made greater gains than similarly situated schools across the state. Additionally, Hispanic students and students eligible for free or reduced-price lunch in higher-need DPS schools showed greater gains than their peers in similar schools in the state—particularly in math. However, there is room for DPS to improve when it comes to closing achievement gaps based on student eligibility for free or reduced-price lunch. Furthermore, although there were insufficient data to analyze achievement by race and ethnicity, the district recognizes that these gaps exist and is working to close them.45

As states across the nation implement their ESSA state plans, districts must bring extra focus to helping struggling schools make gains. To this end, districts can use an approach similar to Denver’s to better identify schools’ needs and strategically deliver resources to them. Importantly, districts should be intentional in including every school in their support system, promoting continuous improvement of all schools, not just those identified for intervention, and providing appropriate tiered supports. This method is a transparent way for the schools, teachers, parents, and other stakeholders to understand which schools receive which resources and why.

However, given the real needs of struggling schools, districts should not lose sight of those schools that states identify for comprehensive or targeted support and improvement under federal law. Indeed, district attention is still needed for the lowest-performing schools—especially given the district’s responsibility to create or oversee school improvement plans. However, as Denver’s approach shows, targeting resources to the lowest-performing schools does not have to come at the expense of continuous improvement for all schools, and vice versa. Accordingly, district leaders and other policymakers should consider creating a framework that supports schools across the spectrum of performance while continuing to provide critical resources to schools with the most needs.

To be sure, DPS gains cannot be attributed solely to its Tiered Support Framework, particularly given the multitude of reforms and improvement efforts that the high-capacity district has undertaken. However, the gains made by the entire district and by the schools receiving preventative or more intensive supports are suggestive of momentum in the right direction. Additional research on the Denver experience—particularly across school characteristics, such as school level and size—would help further illuminate how the district’s Tiered Support Framework may be a model for other districts as they support school improvement at the local level.

Methodology

Overall, this analysis compiled several different sources of data, including Colorado state assessment data, school demographic data, and state and district school ratings data. These data were used to analyze school performance during the 2014-15, 2015-16, and 2016-17 school years in Denver Public Schools, the rest of the state, and subgroups of schools within the district and state.

Using the Colorado Department of Education’s Schoolview State Assessment Data Lab online tool, the author downloaded three years of school-level and within-school subgroup-level CMAS assessment data in math and ELA for the 2014-15, 2015-16, and 2016-17 school years.46 The author also downloaded historical achievement data from the 2011-12, 2012-13, and 2013-14 academic school years.47

The analyses that compared DPS school performance with school performance in the rest of Colorado and that compared schools within DPS with one another included schools with data from the 2014-15, 2015-16, and 2016-17 school years. The analyses that compared DPS schools with peer schools across the state were restricted to schools with six years of data from the 2011-12 school year through the 2016-17 school year.

Assessment data were combined with data from the National Center for Education Statistics’ Common Core of Data for the 2011-12 school year through the 2015-16 school year.48 NCES data were used to determine the total number of students in each school, the percent of students who were eligible for free or reduced-price lunch in each school, and the percent of students by race and ethnicity in each school. Colorado Department of Education per pupil membership data were used to similarly describe schools in the 2016-17 school year.49

School-level data were matched with Colorado Department of Education performance plan data from the 2015-16 and 2016-17 school years.50 Denver school-level data were matched with DPS’ school ratings data from the 2015-16 and 2016-17 school years and with DPS’ historical and current Tiered Support Framework data.51

To create the Colorado peer group of schools, the author regressed math and reading achievement from the 2011-12 school year on the percent of students who were eligible for free or reduced-price lunch, a proxy for poverty, controlling for the race and ethnicity of students, total enrollment, and school district, weighted by the number of students tested in a school and using robust standard errors. This regression model, which had high predictive power, was used to predict a school’s math and ELA achievement given its measure of poverty, controlling for the described variables. Colorado schools whose predicted achievement was similar to that of the 35 elementary schools in the strategic and intensive schools group were selected for the peer group.
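The general idea of this peer-matching step can be sketched as follows. This is a simplified illustration, not the author's model: it uses a single predictor (percent eligible for free or reduced-price lunch) and omits the race and ethnicity, enrollment, and district controls as well as the robust standard errors. The school records are hypothetical, and the `TOLERANCE` rule for deciding which predicted scores count as "similar" is an assumption, since the report does not specify one.

```python
def weighted_linear_fit(x, y, w):
    """Weighted least squares fit of y = intercept + slope * x."""
    total_w = sum(w)
    x_bar = sum(wi * xi for wi, xi in zip(w, x)) / total_w
    y_bar = sum(wi * yi for wi, yi in zip(w, y)) / total_w
    sxx = sum(wi * (xi - x_bar) ** 2 for wi, xi in zip(w, x))
    sxy = sum(wi * (xi - x_bar) * (yi - y_bar) for wi, xi, yi in zip(w, x, y))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope


# Hypothetical schools: (name, percent FRL, mean score, students tested, is_target).
# "T" schools stand in for the strategic and intensive schools; "C" schools are
# candidate peers elsewhere in the state.
schools = [
    ("T1", 85, 716, 200, True),
    ("T2", 90, 709, 150, True),
    ("C1", 88, 713, 180, False),
    ("C2", 40, 759, 300, False),
    ("C3", 82, 717, 120, False),
    ("C4", 20, 781, 250, False),
]

# Fit achievement on poverty, weighting each school by its number of tested students.
intercept, slope = weighted_linear_fit(
    [s[1] for s in schools], [s[2] for s in schools], [s[3] for s in schools]
)


def predict(frl_pct):
    """Predicted achievement for a school with the given percent FRL."""
    return intercept + slope * frl_pct


TOLERANCE = 5.0  # assumed similarity band in score points, not from the report

# Keep candidates whose predicted score falls within the band around the targets.
target_preds = [predict(s[1]) for s in schools if s[4]]
low, high = min(target_preds) - TOLERANCE, max(target_preds) + TOLERANCE
peers = [s[0] for s in schools if not s[4] and low <= predict(s[1]) <= high]
```

Matching on predicted rather than observed achievement means peers are chosen for having similar expected performance given their characteristics, so a single unusually good or bad year is less likely to determine which schools end up in the comparison group.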

Analyses confirmed that, in the 2011-12 school year, the two groups of schools (the 35 strategic and intensive schools and the 117 Colorado peer schools) were similar in math and ELA achievement, poverty, and race and ethnicity. However, because there were more white students and fewer black students in Colorado than in Denver Public Schools during the years analyzed, there were also more white students and fewer black students in the group of Colorado peer schools. Analyses also confirmed that, during the years in which the analysis compared their academic growth, the two groups of schools were similar on those same key data points.

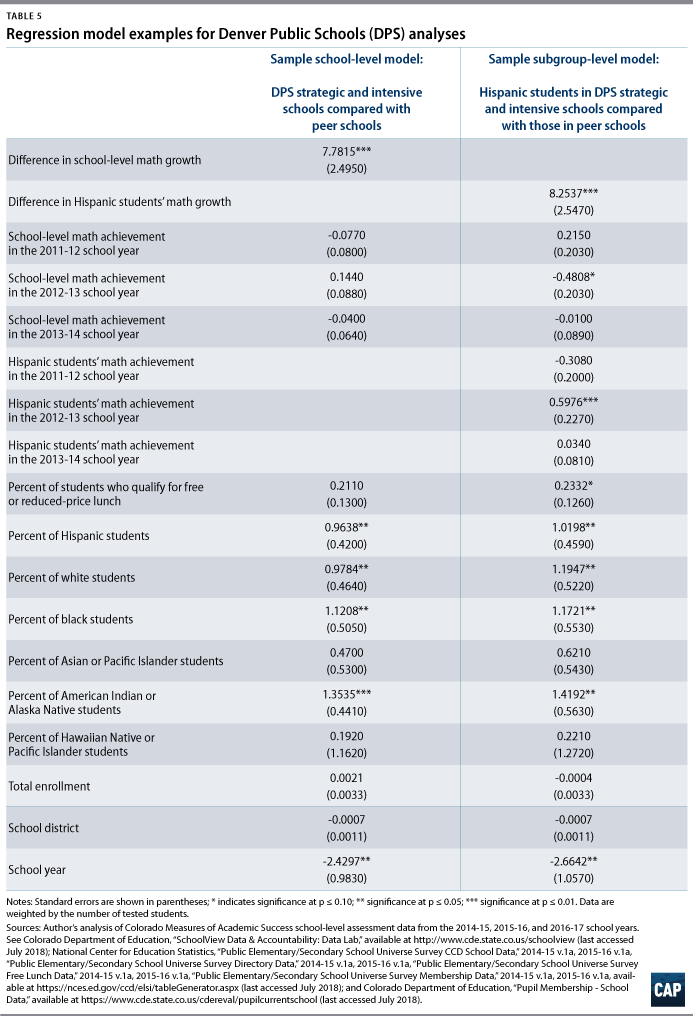

All regression models using multiple years of data were weighted by the total number of students or the subgroup of students tested in each school, as applicable; were clustered by school; and used school-year fixed effects in order to account for school size, within-school correlation, and year effects, respectively. Regression models controlled for total enrollment, the percent of students who were eligible for free or reduced-price lunch, and the race and ethnicity of students in each school, as applicable. Regression models also controlled for prior school and subgroup achievement in the 2011-12, 2012-13, and 2013-14 school years, as applicable. As indicated by the tables, most of the significant results were at the 0.05 level. (for examples of school- and subgroup-level regression models, see Table 5 below)
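One way to write down the general form of such a model is shown below. This is a sketch inferred from the description above, not a specification taken from the author's tables, and all of the notation is introduced here for illustration: $s$ indexes schools, $t$ indexes school years, $D_s$ is an indicator for the group of interest (for example, DPS schools or strategic and intensive schools), $\mathbf{X}_{st}$ collects the controls (enrollment, percent eligible for free or reduced-price lunch, race and ethnicity, and prior achievement where applicable), and $\delta_t$ is a school-year fixed effect.

```latex
\[
  y_{st} = \beta_0 + \beta_1 D_s + \mathbf{X}_{st}^{\top}\boldsymbol{\gamma} + \delta_t + \varepsilon_{st}
\]
% y_{st}: school-level outcome (mean scale score or median growth percentile)
% Observations are weighted by the number of students tested in school s in year t.
% Standard errors are clustered by school, allowing \varepsilon_{st} to be
% correlated across years within the same school.
```

Under this reading, $\beta_1$ corresponds to the "associated difference" reported in the text: the average difference in the outcome between the two groups of schools after accounting for the controls and year effects.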

Additionally, the author collaborated with DPS’ Tiered Support Framework team on this report. As data analyses were based on the methodology described above, district analyses of school performance may differ.

About the author

Samantha Batel was most recently a senior policy analyst for K-12 Education at the Center for American Progress. Her work covered a variety of topics, including school and district accountability; standards and assessments; school improvement and school governance models; school finance; school climate and culture; the school-to-work pipeline; and school redesign.

Acknowledgements

The author would like to thank a host of individuals at the Center for American Progress who helped make this project possible: Erin Roth, the author’s favorite office mate, for her listening ear and generous feedback; CJ Libassi, formerly at the Center, for his instruction, guidance, and time; Rasheed Malik, a respected colleague, for his critical eye; Rob Griffin, also formerly at the Center, for his help during the conception of this project; and Scott Sargrad and Laura Jimenez, for their guidance and valued perspectives.

Additionally, the author would like to thank the following individuals for their support and feedback during various stages of this project: Chung Pham, Lauren Durkee, Amy Keltner, Dan Melluzzo, Maya Lagana, and Ann Whalen from Denver Public Schools; and Dan Jorgensen, from the Colorado Department of Education.